Last week Facebook announced the Open Compute Project (Perspectives, Facebook). I linked to the detailed specs in my general notes on Perspectives and said I would follow up with more detail on key components and design decisions I thought were particularly noteworthy. In this post we’ll go through the mechanical design in detail.

As longtime readers of this blog will know, PUE has many issues (PUE is still broken and I still use it) and is mercilessly gamed in marketing literature (PUE and tPUE). Facebook’s published literature predicts that this center will deliver a PUE of 1.07. Even ignoring the power requirements of the mechanical systems, just delivering power to the servers at a 7% loss is a considerable challenge. I’m slightly skeptical of this number as a fully loaded, annual PUE number, but I can say with confidence that it is one of the nicest mechanical designs I have come across. Hats off to Jay Park and the rest of the Facebook DC design team.
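To make the 1.07 figure concrete, here is a minimal sketch of the PUE arithmetic. PUE is total facility power divided by IT equipment power, so a PUE of 1.07 leaves only 7% of the IT load as the budget for everything else: power distribution losses, fans, pumps, and lighting. The 1,000 kW IT load below is a made-up number for illustration, not a Facebook figure.

```python
def pue(total_facility_kw: float, it_kw: float) -> float:
    """Power Usage Effectiveness: total facility power / IT equipment power."""
    if it_kw <= 0:
        raise ValueError("IT load must be positive")
    return total_facility_kw / it_kw


def overhead_budget_kw(it_kw: float, target_pue: float) -> float:
    """Power available for all non-IT loads (distribution losses, cooling, etc.)."""
    return it_kw * (target_pue - 1.0)


# Hypothetical IT load, chosen only to make the arithmetic easy to follow.
it_load_kw = 1000.0
budget_kw = overhead_budget_kw(it_load_kw, 1.07)
print(f"Non-IT budget at PUE 1.07: {budget_kw:.1f} kW on a {it_load_kw:.0f} kW IT load")
```

Seen this way, the skepticism is easy to understand: power distribution and the entire mechanical plant together have to fit inside that small budget, averaged over the whole year.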

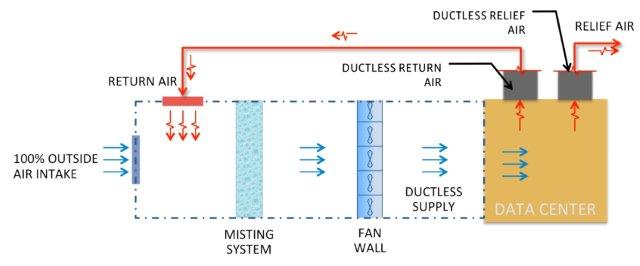

Perhaps the best way to understand the mechanical design is to first walk through the mechanical system diagram and then step through the actual deployment in pictures.

In the mechanical system diagram you will first note there is no process-based cooling (no air conditioning) and no chilled water loop. It’s 100% air cooled, with all IT equipment cooled by outside air, which is particularly effective given the favorable weather conditions in central Oregon.

Outside air is pulled in, mixed with a controlled volume of return air to avoid over-cooling in winter, filtered, evaporatively cooled, and run through a fan wall before being delivered to the cold aisles below.

Air Intake

The cooling system takes up the entire second floor of the facility, with the IT equipment and office space on the first floor. In the picture below, you’ll see the building air intake on the left running the full width of the facility. This intake area in the picture is effectively “outside,” so it includes water drains in the floor. In the picture to the right towards the top, you’ll see the air path to the remainder of the air handling system. To the right at the bottom, you’ll see the white sheet metal sections covering the passage where hot exhaust air is brought up from the hot aisle below to be mixed with outside air to prevent over-cooling on cold days.

Mixing Plenum & Filtration

In the next picture, you can see the next part of the full building air handling system. On the left the louvers at the top are bringing in outside air from the outside air intake room. On the left at the bottom, there are thermostatically controlled louvers allowing hot exhaust air to come up from the IT equipment hot aisle. The hot and outside air are mixed in this full room plenum before passing through the filtration wall visible on the right side of the picture.
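The mixing step can be sketched as a simple energy balance. This is not Facebook’s control logic, just the textbook relation under the simplifying assumption that both air streams have the same density and specific heat, so the mixed temperature is a flow-weighted average. The function names and all temperatures are hypothetical.

```python
def return_air_fraction(outside_c: float, return_c: float, target_c: float) -> float:
    """Fraction of hot-aisle return air needed to reach the target mixed temperature.

    Solves target = f * return + (1 - f) * outside for f, clamped to [0, 1].
    """
    if return_c <= outside_c:
        return 0.0  # return air is no warmer than outside air, so mixing cannot help
    fraction = (target_c - outside_c) / (return_c - outside_c)
    return min(1.0, max(0.0, fraction))


def mixed_temp_c(outside_c: float, return_c: float, fraction: float) -> float:
    """Flow-weighted average temperature of the mixed air stream."""
    return fraction * return_c + (1.0 - fraction) * outside_c


# Hypothetical cold day: 0 C outside, 30 C hot-aisle exhaust, 18 C target.
f = return_air_fraction(outside_c=0.0, return_c=30.0, target_c=18.0)
print(f"return air fraction: {f:.2f}, mixed temp: {mixed_temp_c(0.0, 30.0, f):.1f} C")
```

On a day when the outside air is already warmer than the target, the fraction clamps to zero, the return louvers stay closed, and any further cooling has to come from the evaporative stage.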

The facility grounds are still being worked upon so the filtration system includes an extra set of disposable paper filters to increase the life of the more aggressive filtration media not visible behind.

Misting Evaporative Cooling

In the next picture below you’ll see the temperature- and humidity-controlled high-pressure water misting system, again running the full width of the facility. Most evaporative cooling systems used in data center applications use wet media. This system uses high-pressure water with stainless steel nozzles to produce an atomized mist. These designs produce excellent cooling (high delta-T) but, without aggressive filtration, are prone to calcification and heavy maintenance, and that filtration brings some expense. But they are very effective.

Just beyond the misters, you’ll see what looks like filtration media. This media is there to ensure no airborne water makes it out of the cooling room.
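As a rough illustration of the delta-T available from direct evaporative cooling, the sketch below uses the standard saturation-effectiveness relation: the supply air approaches the wet-bulb temperature by some fraction of the gap between dry-bulb and wet-bulb. The effectiveness value and the temperatures are assumptions picked for the example, not measurements from this facility.

```python
def evaporative_supply_temp_c(dry_bulb_c: float, wet_bulb_c: float,
                              effectiveness: float) -> float:
    """Supply temperature after a direct evaporative cooling stage.

    supply = dry_bulb - effectiveness * (dry_bulb - wet_bulb)
    An effectiveness of 1.0 would cool the air all the way to wet-bulb.
    """
    if not 0.0 <= effectiveness <= 1.0:
        raise ValueError("effectiveness must be between 0 and 1")
    if wet_bulb_c > dry_bulb_c:
        raise ValueError("wet-bulb temperature cannot exceed dry-bulb temperature")
    return dry_bulb_c - effectiveness * (dry_bulb_c - wet_bulb_c)


# Hypothetical hot, dry afternoon: 35 C dry-bulb, 18 C wet-bulb, 85% effectiveness.
supply_c = evaporative_supply_temp_c(35.0, 18.0, 0.85)
print(f"supply air: {supply_c:.2f} C, delta-T: {35.0 - supply_c:.2f} C")
```

The drier the outside air, the larger the gap between dry-bulb and wet-bulb and the more cooling the misters can deliver, which is part of why this approach suits a high-desert climate.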

Exhaust System

In the final picture below, you’ll see we have now reached the other side of the building, where the exhaust fans are found. We bring air in on one side, filter it, cool it, and pump it, and then, just before the exhaust fans visible in the picture, huge openings in the floor allow treated, cooled air to be brought down to the IT equipment cold aisle below.

The exhaust fans visible in the picture control pressure by exhausting air not needed back outside.

The Facebook facility has considerable similarity to the EcoCooling mechanical design I posted last Monday (Example of Efficient Mechanical System Design). Both approaches use the entire building as the air ducting system and both designs use evaporative cooling. The notable differences are: 1) the EcoCooling design is based upon wet media whereas Facebook is using a high-pressure water misting system, and 2) the EcoCooling design runs the mechanical systems beside the compute floor whereas the Facebook design uses the second floor above the IT rooms for all mechanical systems.

The most interesting aspects of the Facebook mechanical design: 1) full building ducting with huge plenum areas, 2) no process-based cooling, 3) mist-based evaporative cooling, 4) large, efficient impellers with variable frequency drives, and 5) full wall, low-resistance filtration.

I’ve been saying for years that mechanical systems are where the largest opportunities for improvement lie and are the area where innovation is most required. The Facebook Prineville facility is most of the way there and is one of the most efficient mechanical system designs I’ve come across. Very elegant and very efficient.

–jrh

b: http://blog.mvdirona.com / http://perspectives.mvdirona.com

Mike said "I thought you meant actual data, and not this one single project. As far as I can tell, OpenCompute is very similar to the specs Google gave out in 2009 (http://news.cnet.com/8301-1001_3-10209580-92.html). Overall, this release isn’t that big of a deal."

I respectfully disagree, Mike. The Facebook release is about current generation gear just going into service and includes enough data and supplier information to duplicate what they did. The Google release in 2009 was very useful, but it covered gear and datacenter designs several generations back that were no longer being deployed. Both are useful and both are noteworthy, but the Facebook release really is different.

–jrh

Jose said "The only bit I did not like is using those fans to push "unwanted" air back outside". I think you are right and I’m pretty sure those fans rarely run. None of that set were on the entire time I was in that area during my visit.

–jrh

Thanks, James. When you said, "Facebook has released more data than almost any company ever," I thought you meant actual data, and not this one single project. As far as I can tell, OpenCompute is very similar to the specs Google gave out in 2009 (http://news.cnet.com/8301-1001_3-10209580-92.html). Overall, this release isn’t that big of a deal.

Don’t get me wrong: it’s a tremendously impressive system and a feat of Engineering. But anyone running at scale came to this conclusion a while ago. I’m curious if anyone will actually adopt or use this information from Facebook.

Thank *you* for your writeup on this.

Nice. The only bit I did not like is using those fans to push "unwanted" air back outside. You spend energy to condition the air (in the pump feeding the misters, as far as I can glean from the text and the pictures) and then spend more energy driving some of that air out of the building.

Mike, here’s a reference that walks through all the data Facebook released:

//perspectives.mvdirona.com/2011/04/07/OpenComputeProject.aspx

This is the main site for Open Compute: http://opencompute.org/

–jrh

James – what kind of data has Facebook released?

I do know the power and server numbers but they have not been released publicly and I respect that. Facebook has released more data than almost any company ever so I’m not criticizing on this point.

–jrh

James, any info available on total number of servers that will be in Prineville or total square footage?

Thanks.

Microsoft and others have moved from ISO standard shipping containers to pre-assembled modules — essentially prefab building components. ISO containers end up being too narrow for two full rows of servers but too wide for a single row.

Why build a building rather than use pre-assembled components or other modular design? They both work. I’m a pretty big supporter of modular systems, but data center costs are dominated by power distribution and mechanical systems, so the cost of the building is relevant but not that significant. If larger, full-building designs are more efficient at scale, they might win. Modules should always win at low scale.

–jrh

Why not build the evaporative cooling system and air filters into ISO containers with the servers like Microsoft is doing, and vent the heat out of a sloping roofed tin shed? That way your cooling scales with your compute load.

And given their privacy record, I assume a tin shed is enough data security for Facebook.

Sorry for the lack of detail in that section, Manju.

The exhaust area has huge openings in the floor which allow the air to pass through to the cold aisles of the servers below. Essentially the second floor is largely open to the first in this area, and most of the air, having entered one end of the building and flowed through filters, misters, and fans, now passes directly below to cool the IT equipment.

–jrh

Interesting article..

Would have liked to see more details in the section "Exhaust System". Expand on the comment "huge openings in the floor allow treated, cooled air to be brought down to the IT equipment cold aisle below" to dive into air circulation flow in the IT equipment cold aisle.

MRB, you asked about over-cooling. It’s not a big deal as long as the temperature changes are relatively slow. And, as you point out, cold air can carry less moisture and we have to stay non-condensing. Admittedly, this isn’t a huge issue in that cold air is usually quite dry.

Hot air recirculation is used nearly universally in air-side economized designs because it’s free, avoids possible humidity issues, and avoids rapid temperature change problems.

–jrh

James, what are the dangers of over-cooling? Loss of humidity?

Benjamin asked, "Is this the Rutherfordton Data center in North Carolina?" No, this is the new Facebook facility in Prineville Oregon.

–jrh

Agree the design is neat! Anyway… somehow it reminds me of the chicken factories … :|

Is this the Rutherfordton Data center in North Carolina?