Jeff Dean gave a great talk at Google IO this year. Some key points from Steve Garrity (MSFT PM), plus some notes from the excellent write-up "Google spotlights data center inner workings":

· Google prefers many unreliable servers to fewer high-cost servers

· A single search query touches 700 to 1,000 machines in < 0.25 sec

· 36 data centers containing > 800K servers

o 40 servers/rack

· Typical H/W failures: Install 1000 machines and in 1 year you’ll see: 1000+ HD failures, 20 mini switch failures, 5 full switch failures, 1 PDU failure

· There are more than 200 Google File System clusters

· The largest BigTable instance manages about 6 petabytes of data spread across thousands of machines

· MapReduce is increasingly used within Google.

o 29,000 jobs in August 2004 and 2.2 million in September 2007

o Average time to complete a job has dropped from 634 seconds to 395 seconds

o Output of MapReduce tasks has risen from 193 terabytes to 14,018 terabytes

· A typical day will run about 100,000 MapReduce jobs

o each occupies about 400 servers

o takes about 5 to 10 minutes to finish
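
As a rough sanity check on the figures above, here is a back-of-envelope sketch in Python. It uses only the numbers quoted in these notes. The function names are mine, and the fleet-share estimate assumes every job holds all 400 of its servers for its full 5 to 10 minutes, which is a simplification I am making rather than anything Jeff stated.

```python
# Back-of-envelope arithmetic using only the figures quoted in the notes above.
SERVERS = 800_000        # "> 800K servers", so treat this as a lower bound
DATA_CENTERS = 36
SERVERS_PER_RACK = 40

JOBS_PER_DAY = 100_000
SERVERS_PER_JOB = 400
JOB_MINUTES = (5, 10)    # "about 5 to 10 minutes"


def fleet_shape():
    """Average servers per data center and total rack count."""
    return {
        "servers_per_data_center": SERVERS / DATA_CENTERS,
        "racks": SERVERS / SERVERS_PER_RACK,
    }


def mapreduce_growth():
    """Growth from August 2004 to September 2007."""
    return {
        "jobs_factor": 2_200_000 / 29_000,   # jobs per month
        "output_factor": 14_018 / 193,       # terabytes of output
        "job_time_ratio": 395 / 634,         # average seconds per job
    }


def daily_mapreduce_server_hours():
    """(low, high) server-hours per day, assuming each job holds all of
    its servers for its whole run (an assumption, not a quoted figure)."""
    return tuple(JOBS_PER_DAY * SERVERS_PER_JOB * m / 60 for m in JOB_MINUTES)


def fleet_share(server_hours):
    """Fraction of the fleet's daily server-hours that this represents."""
    return server_hours / (SERVERS * 24)


if __name__ == "__main__":
    print(fleet_shape())
    print(mapreduce_growth())
    low, high = daily_mapreduce_server_hours()
    print(f"{low:,.0f} to {high:,.0f} server-hours per day")
    print(f"{fleet_share(low):.0%} to {fleet_share(high):.0%} of the fleet")
```

Under those assumptions, MapReduce alone works out to roughly 3.3 to 6.7 million server-hours a day, or about 17% to 35% of an 800K-server fleet. Since 800K is a lower bound on the server count, the real share would be somewhat smaller.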

More detail on the typical failures during the first year of a cluster from Jeff:

· ~0.5 overheating (power down most machines in <5 mins, ~1-2 days to recover)

· ~1 PDU failure (~500-1000 machines suddenly disappear, ~6 hours to come back)

· ~1 rack-move (plenty of warning, ~500-1000 machines powered down, ~6 hours)

· ~1 network rewiring (rolling ~5% of machines down over 2-day span)

· ~20 rack failures (40-80 machines instantly disappear, 1-6 hours to get back)

· ~5 racks go wonky (40-80 machines see 50% packet loss)

· ~8 network maintenances (4 might cause ~30-minute random connectivity losses)

· ~12 router reloads (takes out DNS and external VIPs for a couple minutes)

· ~3 router failures (have to immediately pull traffic for an hour)

· ~dozens of minor 30-second blips for DNS

· ~1000 individual machine failures

· ~thousands of hard drive failures
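
To get a feel for what this list costs in capacity, the sketch below totals the machine-hours lost per year for the three event types where the list gives both a machine count and a duration (PDU failure, rack move, rack failures). The `Event` class and its field names are hypothetical, and the calculation assumes every affected machine is down for the full stated duration. Events without both numbers, such as the overheating incident or the network maintenances, are left out.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    """One line from the first-year failure list (hypothetical structure)."""
    name: str
    per_year: int
    machines: tuple  # (low, high) machines affected per event
    hours: tuple     # (low, high) hours to recover per event

    def machine_hours(self):
        """(low, high) machine-hours lost per year to this event type."""
        low = self.per_year * self.machines[0] * self.hours[0]
        high = self.per_year * self.machines[1] * self.hours[1]
        return low, high


# Only events where the list gives both a machine count and a duration.
EVENTS = [
    Event("PDU failure", 1, (500, 1000), (6, 6)),
    Event("rack move", 1, (500, 1000), (6, 6)),
    Event("rack failure", 20, (40, 80), (1, 6)),
]


def total_machine_hours(events):
    """Sum the low and high estimates across all event types."""
    lows, highs = zip(*(e.machine_hours() for e in events))
    return sum(lows), sum(highs)


if __name__ == "__main__":
    for event in EVENTS:
        print(event.name, event.machine_hours())
    print("total", total_machine_hours(EVENTS))
```

Even this partial tally comes to somewhere between 6,800 and 21,600 machine-hours in the first year, before counting the roughly 1,000 individual machine failures.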

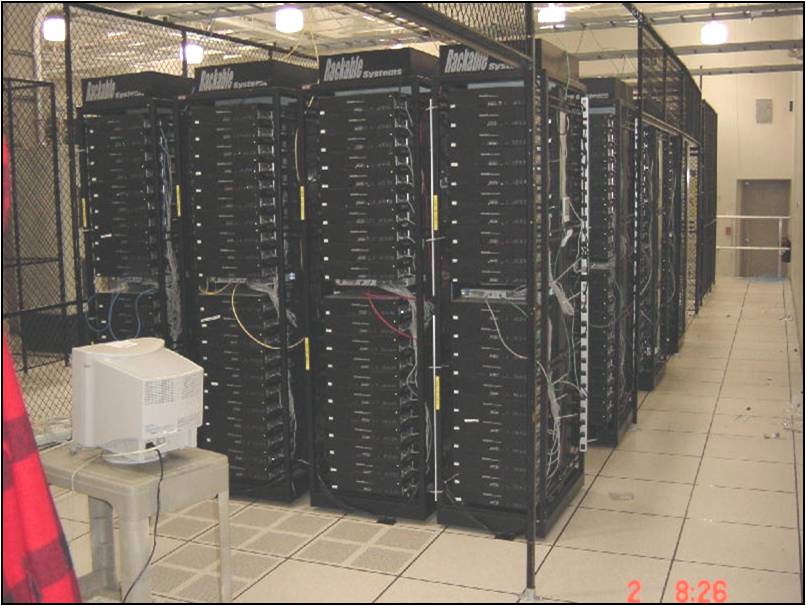

A pictorial history of Google hardware through the years, starting with the current generation of server hardware and working backwards, from Jeff’s talk at the 2007 Seattle Scalability Conference:

Current Generation Google Servers

Google Servers 2001

Google Servers 2000

Google Servers 1999

Google Servers 1997

My general rule on hardware is that, if you have a viewing window into the data center, you are probably spending too much on servers. The Google model of cheap servers with software redundancy is the only economic solution at scale.
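
The reasoning behind software redundancy can be sketched with a little probability. The availability numbers in the example are purely illustrative (they are not Google's), and the model assumes replicas fail independently, which the correlated failures above (PDUs, racks, routers) show is optimistic. Under those assumptions, a service replicated across n servers, each available a fraction a of the time, is unavailable only when all n are down at once.

```python
def replicated_availability(a, n):
    """Availability of a service that needs any one of n replicas up,
    assuming independent failures (an idealization)."""
    if not 0.0 <= a <= 1.0 or n < 1:
        raise ValueError("need 0 <= a <= 1 and n >= 1")
    return 1.0 - (1.0 - a) ** n


def replicas_needed(a, target):
    """Smallest replica count whose combined availability meets target."""
    if not 0.0 < a < 1.0 or not 0.0 < target < 1.0:
        raise ValueError("a and target must be strictly between 0 and 1")
    n = 1
    while replicated_availability(a, n) < target:
        n += 1
    return n


if __name__ == "__main__":
    # Hypothetical numbers: three cheap 99% servers against one 99.99% server.
    cheap = replicated_availability(0.99, 3)
    print(f"three 99% replicas: {cheap:.6f}")
    print("beats a single 99.99% server:", cheap > 0.9999)
    print("replicas of 99% needed for 99.999%:", replicas_needed(0.99, 0.99999))
```

In this idealized model, three replicas of a 99% server come out ahead of a single 99.99% server, so the comparison turns on whether three cheap machines plus the software to coordinate them cost less than one expensive machine.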

Other notes from Google IO:

· http://perspectives.mvdirona.com/2008/05/29/RoughNotesFromSelectedSessionsAtGoogleIODay1.aspx

· http://perspectives.mvdirona.com/2008/05/30/IO2008RoughNotesFromSelectedSessionsAtGoogleIODay2.aspx

All pictures above courtesy of Jeff Dean.

–jrh

James Hamilton, Windows Live Platform Services

Bldg RedW-D/2072, One Microsoft Way, Redmond, Washington, 98052

W:+1(425)703-9972 | C:+1(206)910-4692 | H:+1(206)201-1859 | JamesRH@microsoft.com

H:mvdirona.com | W:research.microsoft.com/~jamesrh | blog:http://perspectives.mvdirona.com

It would be interesting to know what the cost of cooling is

"2) even VERY good hardware isn’t good enough to avoid having to implement redundancy in software to mask failures."

Absolutely! Sadly there’s still a contingent of people that believe it’s possible to build a decent sized platform that doesn’t nail the software as the result of a hardware failure. A point I like to counter with figures such as those above. It also helps when an architect on the Microsoft Windows Live Platform wades in with some clarity. :)

Google does use lower quality hardware at a MUCH lower cost so, yes, they will see more hardware-related failures. The two points that I find interesting are 1) hardware failures contribute the minority of outages; software and administration are much bigger culprits, and 2) even VERY good hardware isn’t good enough to avoid having to implement redundancy in software to mask failures. Once you have the redundancy in software and can withstand hardware failures, why spend more to get good hardware?

My view is that they are on the right path: lots of inexpensive hardware with redundancy at the application and infrastructure level.

–jrh

Just wondering if these failure figures are at all skewed by Google choosing to build its own racks...