Netflix is super interesting in that they are running at extraordinary scale, are a leader in the move to the cloud, and Adrian Cockcroft, the Netflix Director of Cloud Architecture, always gives interesting presentations. In this presentation Adrian covers similar material to his HPTS 2011 talk I saw last month.

His slides are up at: http://www.slideshare.net/adrianco/global-netflix-platform and my rough notes follow:

· Netflix has 20 million streaming members

o Currently in US, Canada, and Latin America

o Soon to be in the UK and Ireland

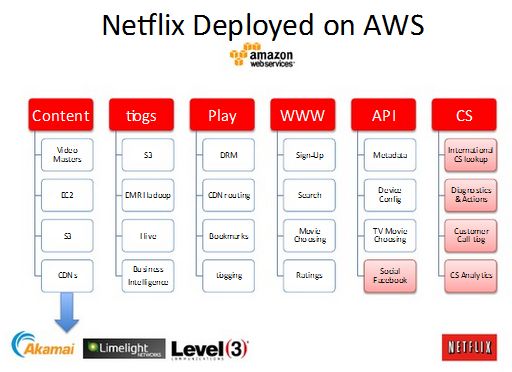

· Netflix is 100% public cloud hosted

· Why did Netflix move from their own high-scale facility to a public cloud?

o Better business agility

o Netflix was unable to build datacenters fast enough

o Capacity growth was both accelerating and unpredictable

o Product launch spikes require massive new capacity (iPhone, Wii, PS3, & Xbox)

o Netflix grew 37x from Jan 2010 through Jan 2011

· Why did Netflix choose AWS as their cloud solution?

o Chose AWS, running Netflix’s own platform and tools on top of it

o Netflix has unique platform requirements and extreme scale needing both agility & flexibility

o Chose AWS partly because it was the biggest public cloud

§ Wanted to leverage AWS investment in features and automation

§ Wanted to use AWS availability zones and regions for availability, scalability, and global deployment

§ Didn’t want to be the biggest customer on a small cloud

o But isn’t Amazon a competitor?

§ Many products that compete with Amazon run on AWS

§ Netflix is the “poster child” for the AWS Architecture

§ One of the biggest AWS customers

§ Netflix strategy: turn competitors into partners

o Could Netflix use a cloud other than AWS?

§ It would be nice, and Netflix already uses 3 interchangeable CDN vendors

§ But no one else has the scale and features of AWS

· “you have to be tall to ride this ride”

· Perhaps in 2 to 3 years?

o “We want to use clouds, we don’t want to build them”

§ Public clouds for agility and scale

§ We use electricity too, but we don’t want to build a power station

§ AWS because it is big enough to allocate thousands of instances per hour when needed

o Netflix Global PaaS

§ Supports all AWS Availability Zones and Regions

§ Supports multiple AWS accounts (test, prod, etc.)

§ Supports cross-region and cross-account data replication & archiving

§ Supports fine-grained security with dynamic AWS keys

§ Autoscales to thousands of instances

§ Monitoring for millions of metrics

o Portals and explorers:

§ Netflix Application Console (NAC): Primary AWS provisioning & config interface

§ AWS Usage Analyzer: cost breakdown by application and resource

§ SimpleDB Explorer: browse domains, items, attributes, values,…

§ Cassandra Explorer: browse clusters, keyspaces, column families, …

§ Base Service Explorer: browse endpoints, configs, perf metrics, …

o Netflix Platform Services:

§ Discovery: Service registry for applications

§ Introspection: Endpoints

§ Cryptex: Dynamic security key management

§ Geo: Geographic IP lookup engine

§ Platform Service: Dynamic property configuration

§ Localization: manage and look up local translations

§ EVcache: Eccentric Volatile (mem)Cached

§ Cassandra: Persistence

§ Zookeeper: Coordination
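
The Discovery entry above is terse, so what follows is a minimal sketch of what a service registry for applications generally does, assuming a lease-and-heartbeat design: instances register under an application name, renew a lease with heartbeats, are evicted once the lease lapses, and callers prefer instances in their own availability zone. `ServiceRegistry`, `Instance`, the 30-second lease, and the zone-preference rule are all hypothetical illustrations, not Netflix’s Discovery API.

```python
import time
from dataclasses import dataclass


@dataclass
class Instance:
    app: str
    instance_id: str
    zone: str
    host: str
    last_heartbeat: float


class ServiceRegistry:
    """Hypothetical in-memory registry: instances register, renew a lease
    with heartbeats, and are dropped once the lease expires."""

    def __init__(self, lease_seconds=30.0, clock=time.monotonic):
        self.lease_seconds = lease_seconds
        self.clock = clock  # injectable so expiry can be tested without sleeping
        self._instances = {}  # (app, instance_id) -> Instance

    def register(self, app, instance_id, zone, host):
        self._instances[(app, instance_id)] = Instance(
            app, instance_id, zone, host, self.clock())

    def heartbeat(self, app, instance_id):
        inst = self._instances.get((app, instance_id))
        if inst is None:
            return False  # unknown or already evicted: caller must re-register
        inst.last_heartbeat = self.clock()
        return True

    def lookup(self, app, zone=None):
        now = self.clock()
        # Evict every instance whose lease has lapsed.
        expired = [key for key, inst in self._instances.items()
                   if now - inst.last_heartbeat > self.lease_seconds]
        for key in expired:
            del self._instances[key]
        live = [inst for inst in self._instances.values() if inst.app == app]
        if zone is not None:
            # Assumed policy: prefer same-zone instances, else any live one.
            local = [inst for inst in live if inst.zone == zone]
            if local:
                return local
        return live
```

Passing the clock in as a parameter keeps lease expiry deterministic in tests; a caller in `us-east-1a` gets local instances while any are alive and falls back to other zones otherwise.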

o Netflix Persistence Services:

§ SimpleDB: Netflix moving to Cassandra

· Latencies typically over 10 msec

§ S3: using the JetS3t-based interface with Netflix changes and updates

§ Eccentric Volatile Cache (evcache)

· Discovery-aware, memcached-based backend

· Client abstractions for zone-aware replication

· Option to write to all zones, with fast reads from the local zone

· On average, latencies of under 1 msec

§ Cassandra

· Chosen because Netflix values availability over consistency

· On average, latency of “few microseconds”

§ MongoDB

§ MySQL: supports hard-to-scale, legacy, and small relational models
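
To make the EVcache “write to all zones, fast read from local” behavior concrete, a minimal sketch, assuming one plain dict per availability zone as a stand-in for the memcached cluster in that zone. `ZoneAwareCache` is a hypothetical name, and falling back to other zones on a local miss is an assumption, not something stated in the talk.

```python
class ZoneAwareCache:
    """Hypothetical zone-aware cache client. Each dict in `replicas`
    stands in for a memcached cluster in one availability zone."""

    def __init__(self, zones, local_zone):
        if local_zone not in zones:
            raise ValueError("local zone must be one of the zones")
        self.local_zone = local_zone
        self.replicas = {zone: {} for zone in zones}

    def set(self, key, value, all_zones=True):
        # Write to every zone by default so any zone can serve reads.
        targets = list(self.replicas) if all_zones else [self.local_zone]
        for zone in targets:
            self.replicas[zone][key] = value

    def get(self, key):
        # Fast path: read from the replica in our own zone.
        local = self.replicas[self.local_zone]
        if key in local:
            return local[key]
        # Assumed fallback: try the other zones before reporting a miss.
        for zone, store in self.replicas.items():
            if zone != self.local_zone and key in store:
                return store[key]
        return None
```

Writing to every zone costs extra write traffic, but it means the loss or flush of one zone’s cache does not turn every read in that zone into a trip to the backing store.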

o Implemented a Multi-Regional Data Replication system:

§ Oracle to SimpleDB, with a queued reverse path using SQS
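
The replication entry is a one-liner, so here is a minimal sketch of the shape it describes: changed rows flow forward from Oracle into SimpleDB, while writes made on the cloud side are queued and applied back to Oracle later. Plain dicts stand in for Oracle and SimpleDB, and a `collections.deque` stands in for an SQS queue; `ReplicationSketch` and its methods are hypothetical.

```python
from collections import deque


class ReplicationSketch:
    """Hypothetical model: dicts stand in for Oracle and SimpleDB,
    a deque stands in for an SQS queue on the reverse path."""

    def __init__(self):
        self.oracle = {}
        self.simpledb = {}
        self.reverse_queue = deque()  # stand-in for SQS

    def replicate_forward(self, changed_keys):
        # Forward path: copy rows that changed in Oracle into SimpleDB.
        for key in changed_keys:
            self.simpledb[key] = self.oracle[key]

    def cloud_write(self, key, value):
        # Cloud-side write: visible in SimpleDB at once, queued for Oracle.
        self.simpledb[key] = value
        self.reverse_queue.append((key, value))

    def drain_reverse(self):
        # Reverse path: apply queued cloud writes to Oracle; return the count.
        applied = 0
        while self.reverse_queue:
            key, value = self.reverse_queue.popleft()
            self.oracle[key] = value
            applied += 1
        return applied
```

One caveat the deque hides: a standard SQS queue delivers messages at least once and does not guarantee ordering, so a real reverse-path consumer would need idempotent, order-tolerant updates (for example, a version number on each row).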

o High Availability:

§ Cassandra stores 3 local copies, 1 per availability zone

§ Each AWS availability zone is a separate building with separate power, etc., but the zones are still close enough together that synchronous access is practical

§ Synchronous access, durable, and highly available
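
The availability argument above comes down to majority arithmetic. A minimal sketch, assuming reads and writes use Cassandra’s QUORUM consistency level (the notes don’t say which level Netflix uses): with 3 replicas, one per zone, a quorum is 2. Losing any single zone still leaves a quorum, and because 2 + 2 > 3, every quorum read overlaps every quorum write. The helper names and zone names are hypothetical.

```python
def quorum(replication_factor):
    # Cassandra-style QUORUM: a strict majority of the replicas.
    return replication_factor // 2 + 1


def place_replicas(zones, replication_factor):
    # One replica per availability zone, as described in the talk notes.
    if replication_factor > len(zones):
        raise ValueError("need at least one zone per replica")
    return list(zones[:replication_factor])


def quorum_available(replica_zones, failed_zones):
    # True if enough replicas survive the failed zones to form a quorum.
    live = [zone for zone in replica_zones if zone not in failed_zones]
    return len(live) >= quorum(len(replica_zones))


zones = ["us-east-1a", "us-east-1b", "us-east-1c"]
replicas = place_replicas(zones, 3)
```

With this placement, `quorum_available(replicas, {"us-east-1a"})` is true, while losing two zones at once is not survivable at QUORUM.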

Adrian’s slide deck is posted at: http://www.slideshare.net/adrianco/global-netflix-platform.

–jrh

James Hamilton